
When reading some observers' diagnoses of what ails the United States, one can get the impression that Americans are living in an unprecedented age of public scientific ignorance. There is reason, however, to wonder if people today are really any more ignorant of facts like water boiling at lower temperatures at higher altitudes or if any more people believe in astrology than in the past. According to some studies, Americans have never been more scientifically literate. Nevertheless, there is no shortage of hand-wringing about the remaining degree of public scientific illiteracy and what it might mean for the future of the United States and American democracy. Indeed, scientific illiteracy is often blamed for the anti-vaccination movement as well as opposition to genetically modified organisms (GMOs) and nuclear power. However, I think such arguments misunderstand the issue. If America has a problem with regard to science, it is not due to a dearth of scientific literacy but a decline in science's public legitimacy.

The thinking underlying worries about widespread scientific illiteracy is rooted in what is called the “deficit model.” In the deficit model, the cause of the discrepancy between the beliefs of scientists and those of the public is, in the words of Dietram Scheufele and Matthew Nisbet, a “failure in transmission.” That is, it is believed that negligence of the media to dutifully report the “objective” facts or the inability of an irrational public to correctly receive those facts prevents the public from having the “right” beliefs regarding issues like science funding or the desirability of technologies like genetically modified organisms. Indeed, a blogger for Scientific American blames the opposition of liberals to nuclear power on “ignorance” and “bad psychological connections.” It is perhaps only a slight exaggeration to say that the deficit model depicts anyone who is not a technological enthusiast as uninformed, if not idiotic.

Regardless of whether or not the facts regarding these issues are actually “objective” or totally certain (both sides dispute the validity of each other’s arguments on scientific grounds), it remains odd that deficit model commentators view the discrepancy between scientists’ and the public’s views on GMOs and other issues as a problem for democracy. Certainly they are correct that it is preferable to have a populace that can think critically and suffers from few cognitive impairments to inquiry when it comes to wise public decision making. Yet, the idea that, when properly “informed” of the relevant facts, scientifically literate citizens would immediately agree with experts is profoundly undemocratic: It belittles and erases all the relevant disagreements about values and rights. Such a view ignores, for instance, the fact that the dispute over GMO labeling has as much to do with ideas about citizens’ right to know and desire for transparency as the putative safety of GMOs.
Acting as if such concerns do not matter – that only the outcome of recent safety studies does – deprives the people sharing those concerns of a voice. The deficit model inexorably excludes those not working within a scientistic framework from democratic decision making.

Given the deficit model’s democratic deficits as well as the lack of any evidence that scientific illiteracy is actually increasing, advocates of GMOs and other potentially risky instances of technoscience ought to look elsewhere for the sources of public scientific controversy. If anything has changed in the last decades it is that science and technology have less legitimacy. Indeed, science writers could better grasp this point by reading one of their own. Former Discover writer Robert Pool notes that the point of legal and regulatory challenges to new technoscience is not simply to render it safer but also more acceptable to citizens. Whether or not citizens accept a new technology depends upon the level of trust they have in technical experts (and the firms they work for). Opposition to GMOs, for instance, is partly rooted in the belief that private firms such as Monsanto cannot be trusted to adequately test their products and that the FDA and EPA are too toothless (or captured by industry interests) to hold such companies to a high enough standard. Technoscientists and cheerleading science writers are probably oblivious to the workings and requirements for earning public trust because they are usually biased toward seeing new technologies as already (if not inherently) legitimate.

Those deriding the public for failing to recognize the supposedly objective desirability of potentially risky technology, moreover, have fatally misunderstood the relationship between expertise, knowledge, and legitimacy. It is unreasonable to expect members of the public to somehow find the time (or perhaps even the interest) to learn about the nuances of genetic transmission or nuclear safety systems.
Such expectations place a unique and unfair burden on lay citizens. Many technical experts, for instance, might be found to be equally ignorant of elementary distinctions in the social sciences or philosophy. Yet, few seem to consider such illiteracies to be equally worrisome barriers to a well-functioning democracy. In any case, as political scientists Joseph Morone and Edward Woodhouse argue, the position of the public is not to evaluate complex or arcane technoscientific problems directly but to decide which experts to trust to do so. Citizens, according to Morone and Woodhouse, were quite reasonable to turn against nuclear power when overoptimistic safety estimates were proven wrong by a series of public blunders, including accidents at Three Mile Island and Chernobyl, as well as increasing levels of disagreement among experts. Citizens’ lack of understanding of nuclear physics was beside the point: The technology was oversold and overhyped. The public now had good grounds to believe that experts were not approaching nuclear energy or their risk assessments responsibly. Contrary to the assumptions of deficit modelers, legitimacy is not earned simply through technical expertise but via sociopolitical demonstrations of trustworthiness. If technoscientific experts were to really care about democracy, they would think more deeply about how they could better earn legitimacy in the eyes of the public. At the very least, research in science and technology studies provides some guidance on how they ought not to proceed. For example, after post-Chernobyl accident radiation rained down on parts of Cumbria, England, scientists quickly moved in to study the effects as well as ensure that irradiated livestock did not get moved out of the area. Their behavior quickly earned them the ire of local farmers. 
Scientists not only ignored the relevant expertise that farmers had regarding the problem but also made bold pronouncements of fact that were later found to be false, including the claim that the nearby Sellafield nuclear processing plant had nothing to do with local radiation levels. The certainty with which scientists made their uncertain claims as well as their unwillingness to respond to criticism by non-scientists led farmers to distrust them. The scientists lost legitimacy as local citizens came to believe that they were sent there by the national government to stifle inquiry into what was going on rather than learn the facts of the matter.

Far too many technoscientists (or at least their associated cheerleaders in popular media) seem content to repeat the mistakes of these Cumbrian radiation scientists. “Take your concerns elsewhere. The experts are talking,” they seem to say when non-experts raise concerns. “Come back when you’ve got a science degree.” Ironically (and tragically), experts’ embrace of deficit model understandings of public scientific controversies undermines the very mechanisms by which legitimacy is established. If the problem is really a deficit of public trust, diminishing the transparency of decisions and eliminating possibilities for citizen participation is self-defeating. Anything looking like a constructive and democratic resolution to controversies like GMOs, fracking, or nuclear energy is only likely to happen if experts engage with and seek to understand popular opposition. Only then can they begin to incrementally reestablish trust. Insofar as far too many scientists and other experts believe they deserve public legitimacy simply by their credentials – and some even denigrate lay citizens as ignorant rubes – public scientific controversies are likely to continue to be polarized and pathological.

5/15/2014 What Was Step Two Again? Underpants Gnomes and the Technocratic Theory of Progress

Repost from TechnoScience as if People Mattered

Far too rarely do most people reflect critically on the relationship between advancing technoscience and progress. The connection seems obvious, if not “natural.” How else would progress occur except by “moving forward” with continuous innovation? Many, if not most, members of contemporary technological civilization seem to possess an almost unshakable faith in the power of innovation to produce an unequivocally better world. Part of the purpose of Science and Technology Studies (STS) scholarship is to precisely examine if, when, how and for whom improved science and technology means progress. Failing to ask these questions, one risks thinking about technoscience the way underpants gnomes think about underwear.

Wait. Underpants gnomes? Let me back up for a second. The underpants gnomes are characters from the second season of the television show South Park. They sneak into people’s bedrooms at night to steal underpants, even the ones that their unsuspecting victims are wearing. When asked why they collect underwear, the gnomes explain their “business plan” as follows: Step 1) Collect underpants, Step 2) “?”, Step 3) Profit! The joke hinges on the sheer absurdity of the gnomes’ single-minded pursuit of underpants in the face of their apparent lack of a clear idea of what profit means and how underpants will help them achieve it.

Although this reference is by now a bit dated, these little hoarders of other people’s undergarments are actually one of the best pop-culture illustrations of the technocratic theory of progress that often undergirds people’s thinking about innovation. The historian of technology Leo Marx described the technocratic idea of progress as: "A belief in the sufficiency of scientific and technological innovation as the basis for general progress. 
It says that if we can ensure the advance of science-based technologies, the rest will take care of itself. (The “rest” refers to nothing less than a corresponding degree of improvement in the social, political, and cultural conditions of life.)" The technocratic understanding of progress amounts to the application of underpants gnome logic to technoscience: Step 1) Produce innovations, Step 2) “?”, Step 3) Progress! This conception of progress is characterized by a lack of a clear idea of not only what progress means but also how amassing new innovations will bring it about.

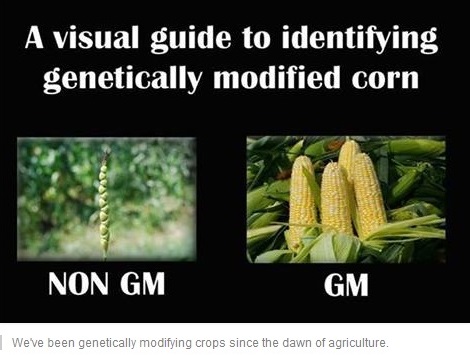

The point of undermining this notion of progress is not to say that improved technoscience does not or could not play an important role in bringing about progress but to recognize that there is generally no logical reason for believing it will automatically and autonomously do so. That is, “Step 2” matters a great deal. For instance, consider the 19th century belief that electrification would bring about a radical democratization of America through the emancipation of craftsmen, a claim that most people today will recognize as patently absurd. Given the growing evidence that American politics functions more like an oligarchy than a democracy, it would seem that wave after wave of supposedly “democratizing” technologies – from television to the Internet – have not been all that effective in fomenting that kind of progress. Moreover, while it is of course true that innovations like the polio vaccine, for example, certainly have meant social progress in the form of fewer people suffering from debilitating illnesses, one should not forget that such progress has been achievable only with the right political structures and decisions. The inventor of the vaccine, Jonas Salk, notably did not attempt to patent it, and the ongoing effort to eradicate polio has entailed dedicated global-level organization, collaboration and financial support. Hence, a non-technocratic civilization would not simply strive to multiply innovations under the belief that some vague good may eventually come out of it. Rather, its members would be concerned with whether or not specific forms of social, cultural or political progress will in fact result from any particular innovation. Ensuring that innovations lead to progress requires participants to think politically and social scientifically, not just technically. 
More importantly, it would demand that citizens consider placing limits on the production of technoscience that amounts to what Thoreau derided as “improved means to unimproved ends.” Proceeding more critically and less like the underpants gnomes means asking difficult and disquieting questions of technoscience. For example, pursuing driverless cars may lead to incremental gains in safety and potentially free people from the drudgery of driving, but what about the people automated out of a job? Does a driverless car mean progress to them? Furthermore, how sure should one be of the presumption that driverless cars (as opposed to less automobility in general) will bring about a more desirable world? Similarly, how should one balance the purported gains in yield promised by advocates of contemporary GMO crops against the prospects for a greater centralization of power within agriculture? How much does corn production need to increase to be worth the greater inequalities, much less the environmental risks? Moreover, does a new version of the iPhone every six months mean progress to anyone other than Apple’s shareholders and elite consumers? It is fine, of course, to be excited about new discoveries and inventions that overcome previously tenacious technical problems. However, it is necessary to take stock of where such innovations seem to lead. Do they really mean progress? More importantly, whose interests do they progress and how? Given the collective failure to demand answers to these sorts of questions, one has good reason to wonder whether technological civilization really is making progress. Contrary to the vision of humanity being carried up to the heavens of progress upon the growing peaks of Mt. Innovation, it might be that many of us are more like underpants gnomes dreaming of untold and enigmatic profits amongst piles of what are little better than used undergarments. 
One never knows unless one stops collecting long enough to ask, “What was step two again?”

5/5/2014 Don't Drink the GMO Kool-Aid: Continuity Arguments and Controversial TechnoScience

Repost from TechnoScience as if People Mattered

Far too much popular media and opinion is directed toward getting people to “Drink the Kool-Aid” and uncritically embrace controversial science and technology. One tool from the Science and Technology Studies (STS) toolbox that can help prevent you from too quickly taking a swig is the ability to recognize and take apart continuity arguments. Such arguments take the form: Contemporary practice X shares some similarities with past practice Y. Because Y is considered harmless, it is implied that X must also be harmless. Consider the following meme about genetically modified organisms (GMOs) from the "I Fucking Love Science" Facebook feed:

This meme is used to argue that since selective breeding leads to the modification of genes, it is not significantly distinct from altering genetic code via any other method. The continuity of “modifying genetic code” from selective breeding to contemporary recombinant DNA techniques is taken to mean that the latter is no more problematic than the former. This is akin to arguing that exercise and proper diet are no different from liposuction and human-growth-hormone injections: They are all just techniques for decreasing fat and increasing muscle, right?

Continuity arguments, however, are usually misleading and mobilized for thinly veiled political reasons. As certain technology ethicists argue, “[they are] often an immunization strategy, with which people want to shield themselves from criticism and to prevent an extensive debate on the pros and cons of technological innovations.” Therefore, it is unsurprising to see continuity arguments abound within disagreements concerning controversial avenues of scientific research or technological innovation, such as GMO crops. Fortunately for you, dear reader, they are not hard to recognize and tear apart. Here are the three main flaws in reasoning that characterize most continuity arguments.

To begin, continuity arguments attempt to deflect attention away from the important technical differences that do exist. Selective breeding, for example, differs substantively from more recent genetic modification techniques. The former mimics already existing evolutionary processes: The breeder artificially creates an environmental niche for certain valued traits by ensuring the survival and reproduction of only the organisms with those traits. Species, for the most part, can only be crossed if they are close enough genetically to produce viable offspring. One need not be an expert in genetic biology to recognize how the insertion of genetic material from very different species into another via retroviruses or other techniques introduces novel possibilities, and hence new uncertainties and risks, into the process.

Second, the argument presumes that the past technoscientific practices being portrayed as continuous with novel ones are themselves unproblematic. Although selective breeding has been pretty much ubiquitous in human history, its application has not been without harm. For instance, those who oppose animal cruelty often take exception to the creation, maintenance and celebration of “pure” breeds. 
Not only does the cultural production of high status, expensive pedigrees provide a financial incentive for “puppy mills” but it also discourages the adoption of mutts from shelters. Moreover, decades or centuries of inbreeding have produced animals that needlessly suffer from multiple genetic diseases and deformities, like boxers that suffer from epilepsy. Additionally, evidence is emerging that historical practices of selective breeding of food crops have rendered many of them much less nutritious. This, of course, is not news to “foodies” who have been eschewing iceberg lettuce and sweet onions for arugula and scallions for years. Regardless, one need not look far to see that even relatively uncontroversial technoscience has its problems.

Finally and most importantly, continuity arguments assume away the environmental, social, and political consequences of large-scale sociotechnical change. At stake in the battle over GMOs, for instance, are not only the potential unforeseen harms via ingestion but also the probable cascading effects throughout technological civilization. There are worries that the overliberal use of pesticides, partially spurred by the development of crops genetically modified to be tolerant of them, is leading to “superweeds” in the same way that the overuse of antibiotics leads to drug-resistant “superbugs.” GMO seeds, moreover, typically differ from traditional ones in that they are designed to “terminate” or die after a period of time. When that fails, Monsanto sues farmers who keep seeds from one season to the next. This ensures that Monsanto and other firms become the obligatory point of passage for doing agriculture, keeping farmers bound to them like sharecroppers to their landowner. On top of that, GMOs, as currently deployed, are typically but one piece of a larger system of industrialized monoculture and factory farms. 
Therefore, the battle is not merely about the putative safety of GMO crops but over what kind of food and farm culture should exist: One based on centralized corporate power, lots of synthetic pesticides, and high levels of fossil fuel use along with low levels of biodiversity or the opposite? GMO continuity arguments deny the existence of such concerns.

So the next time you hear or read someone claim that some new technological innovation or area of science is the same as something from the past, such as Google Glass not being substantially different from a smartphone or genetically engineering crops to be Roundup-ready not being significantly unlike selectively breeding for sweet corn, stop and think before you imbibe. They may be passing you a cup of Googleberry Blast or Monsanto Mountain Punch. Fortunately, you will be able to recognize the cyanide-laced continuity argument at the bottom and dump it out. Your existence as a critical-thinking member of technological civilization will depend on it.

During debates about some contemporary scientific controversy, such as GMO foods or the effects of climate change, someone almost invariably claims at some point to be on the “right side” of science. Opponents, accordingly, are implied to be either hopelessly biased or under the spell of some form of pseudoscientific legerdemain. Confronted by just such an argument this week during a discussion over Elizabeth Warren’s vote against mandating the labeling of GMO ingredients, I was mostly struck by how profoundly unscientific and ignorant of the actual functioning of science and politics this rhetorical move is.

In order to avoid overstating my case, I should make clear that some knowledge claims are fairly straightforward and obvious cases of pseudoscience. Although philosophy of science has yet to develop unproblematic criteria for demarcating science from pseudoscience, the line between scientific approaches to inquiry and pseudoscientific ideology can be fuzzily drawn around such practices and dispositions as the willingness of practitioners to subject their claims to scrutiny or admit limitations to the theories they develop. Pyramid power and astrology are typical, though somewhat trivial, examples. The labels “scientific” and “pseudoscientific,” however, are best thought of as ideal types; the behaviors of most inquirers usually lie somewhere in between, and this is normally not a problem.

Decades ago Ian Mitroff demonstrated the diversity of inquiry styles used by practicing scientists. Science requires many types of researchers for its dynamism, from hardliner empiricists to armchair bound synthesizers and theoreticians – who may play more fast and loose with existing data. It is a social process that seems better characterized by the continual raising of new questions, evermore highlighting new uncertainties, complexities and limits to understanding, than the establishment of enduring and incontrovertible facts. Theories can almost always be refined or subjected to new challenges; data is invariably reinterpreted as new ideas and instruments are developed. At the same time, respected and successful scientists are generally not the exemplars of objectivity typically depicted in popular media, having pet theories and engaging in political wrangling with opponents.

It is this characterization of science that makes claims to being on the "right side of science" so troubling. 
The way the word “fact” is used attempts to transform the particular conclusion of scientific study from tentative conjecture based on incomplete data analyzed via inevitably imperfect techniques and technologies into something incontrovertible and unchallengeable. Even worse, it shuts down further inquiry, and there can be nothing more profoundly unscientific and epistemologically stale than eliminating the possibility for further questions or denying the inherent uncertainty and fallibilism of human claims to truth. Recognition of this, however, is frequently thrown out the window during moments of controversy.

Some opponents of GMO labeling contend that doing so automatically implies that genetically modified ingredients are harmful and lends credence to what they see as pseudoscientific fear mongering concerning their potential effects on human health. The person I was arguing with believed that the absence of what he considered to be a “strong” linkage between human or animal well-being and GMO food in the decades since their introduction rendered their safety a scientific “fact.”

To begin, it is specious reasoning to assume that the absence of evidence is automatically evidence of absence. The presumption that the current state of research has already adequately explored all the risks associated with a particular technology is dangerous and should not be made lightly. The historical record is full of technologies, such as pesticides (DDT), medicines (Vioxx) or industrial chemicals (BPA), at one time thought to be safe and discovered to be dangerous only after being put into widespread use. It is incredibly risky to project the universality of a particular present finding into the foreseeable future – when available methods, data and knowledge will likely be more sophisticated than in the present. 
Furthermore, it is incredibly narrow-minded to assume that it is only the potential health risks posed by the ingestion of GMOs by individual consumers that we should be worried about. Any technology, like the manipulation of recombinant DNA, is part and parcel of a larger sociotechnical system. GMO foods are, for the foreseeable future, intertwined with particular ways of farming (industrial scale monoculture), certain economic arrangements (farmers utterly dependent on biotech firms like Monsanto) and specific ways of conceiving how human beings should relate to nature and food (as a pure commodity). Citizens may be legitimately concerned about any or all of the above facets of GMO food as a technology; many of these concerns, clearly, cannot be answered or done away with by conducting a scientific experiment.

Regardless, the claim that science is on one’s side also fails to recognize how scientific studies are scrutinized in imbalanced ways and doubt manufactured when politically useful. Nowhere is this more apparent than in the controversy surrounding Seralini’s study purporting to find a link between cancer and the ingestion of GMO and RoundUp treated corn. As numerous ensuing commentaries point out, the connections drawn in the paper remain uncertain and the experimental design seemed to lack statistical power. Yet, many critics claimed the study was rubbish for its “nonstandard” methodological choices, even though Seralini’s team used many of the exact same methods as industry research claiming to demonstrate the safety of GMO food. My point is not to claim whether or not the effects observed by Seralini’s team are real but to note that scientists and various pundits are often incredibly inconsistent in their judgments of the flaws of a particular study or result. 
Imperfections tolerated in other studies seem to conveniently render controversial studies pseudoscientific when the results are incompatible with the critic’s other sociopolitical commitments, like the association of “progress” with the increasing application of biotechnology to food production, or powerful political interests.

More broadly, the desire to be on the “right side of the facts” in controversial areas often takes on the form of a fetish. Such thinking seems founded on the hope that science can free humanity of the anxieties inherent in doing politics, which I think is best framed as the process of deciding how to organize civilization in the face of uncertainty, diversity and complexity. If a particular way of designing our collective lives can become enshrined in “fact,” then we no longer have to subject the choice to the messiness of democratic decision making or pursue the reconciliation of different interests and ideas about how human beings ought to live. Yet, if a particular scientific result is, at its best, something we can be only tentatively certain about and, at its worst, a falsehood only temporarily propped up by a constellation of inadequate theorizing, techniques and methodologies – or even cultural bias or outright fabrication – it would seem that science is generally not up to the task of freeing humanity from the need for politics.

This point leads to one of the main problems with the way people tend to talk about “scientific controversies”: It is premised on a false dichotomy. Politics and good science are often taken to be polar opposites. It seems to presume that politics is the stuff of mere opinion and emotion and outside the realm of genuine inquiry. Such a dichotomy, to me, seems to do damage to our understandings of both politics and science. 
The qualities celebrated in idealized versions of scientists – openness to new ways of thinking, self-reflective criticality and so on – seem to be qualities also befitting of political citizenship. At the same time, the assumption that science is the realm of absolute certainties and falsehoods – rather than the messy muddling through of various complexities, uncertainties and ignorances – leads to an interpretation of scientific findings that many practicing scientists themselves would not condone.

The greatest challenges facing technological civilization are best met through inquiry, debate and the recognition of human ignorance, not blind faith in some naïve, fairy-tale understanding of science and fact. To presume that it is more "objective" or rational to have the opinions and arguments of a particular set of men and women wearing lab coats carry the most weight in deciding our collective futures is to simply smuggle in one set of interests and ideas about the good under the guise of “just siding with the facts.” Even worse, it fails to comprehend the partially social character of fact production and the inherent fallibility of human knowledge. An understanding of politics more befitting of those claiming a “scientific outlook” on reality would recognize that citizens and decision makers are inexorably locked in conflict-ridden processes of juggling facts, interests and ideas about the good life, all fraught with uncertainty. When more participants in a scientific controversy understand this, perhaps then we can have a more fruitful public dialogue about GMO foods or natural gas hydrofracking.

Note: I have to give credit to Canadian musician Danny Michel for the inspiration for the title of this post: "If God's on Your Side Than Who's on Mine?"


Author: Taylor C. Dotson is an associate professor at New Mexico Tech, a Science and Technology Studies scholar, and a research consultant with WHOA. He is the author of The Divide: How Fanatical Certitude is Destroying Democracy and Technically Together: Reconstructing Community in a Networked World. Here he posts his thoughts on issues mostly tangential to his current research.
